Brain

Source: Clark MA, Douglas M, Choi J (March 28, 2018). "35.3 The Central Nervous System". Biology 2e. Houston, Texas: OpenStax.[1] License: CC-BY-4.0.

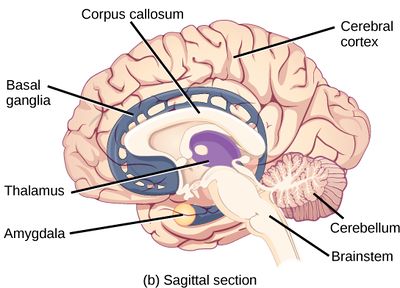

The brain is an organ that serves as the center of the nervous system in all vertebrate and most invertebrate animals. The brain is located in the head, usually close to the sensory organs for senses such as vision. It is divided into three parts: the brainstem, cerebellum and cerebrum. The brain and spinal cord make up the central nervous system (CNS).

The brain and spinal cord have their own immune system.[2] Tissue-resident macrophages, known as microglia, are a part of that immune system.[3]

The brain also has its own lymphatic system which links directly to the blood-borne immune system.[4]

Injury to the brain or spinal cord, such as that caused by stroke or trauma, results in a considerable weakening of the immune system.[5]

ME/CFS

Anatomical changes

Significant changes in white and gray matter volumes have frequently been found in patients with ME/CFS but no consistent pattern has been found.[6][7][8][9]

| Year | Authors | N | Criteria | Findings | Ref |
|---|---|---|---|---|---|
| 2004 | Okada, et al | 16 | | Reduced gray-matter volume in the bilateral prefrontal cortex. Volume reduction in the right prefrontal cortex correlated with fatigue severity. | [10] |
| 2016 | Shan, et al | | Fukuda & CCC | Decreases in white matter, gray matter and blood volume deficits | [6] |
| 2012 | Basant Puri, et al | | | Reduced grey matter volume in the occipital lobes, the right angular gyrus and the posterior division of the left parahippocampal gyrus. | [9] |
| 2014 | Zeineh, et al | | | Diminished white matter, white matter abnormalities in the right hemisphere. | [8] |

Blood flow

Several studies of ME/CFS patients have found evidence of reduced cerebral blood flow,[11][12][13][14][15][16][17][18] including in the brainstem[12][13] and cerebral cortex.[15]

A 1995 study found hypoperfusion (reduced blood flow) to the brainstem in patients with ME/CFS.[12] In 2011, a study of brain involvement in CFS found "a strong correlation" between brainstem gray matter volume and pulse pressure, "suggesting impaired cerebrovascular autoregulation."[13]

A study of 429 ME/CFS patients found that 90% of patients had reduced cerebral blood flow during a head-up tilt test, even in the absence of postural orthostatic tachycardia syndrome or orthostatic hypotension.[19][20]

Metabolism

A 2003 study of cerebral glucose metabolism in 26 patients with chronic fatigue syndrome via 18-fluorodeoxyglucose positron emission tomography (FDG-PET) found evidence of hypometabolism (reduced glucose consumption) in approximately half of patients.[21] A 1998 PET study also found evidence of reduced metabolism in 18 patients.[22]

Patients with ME/CFS have also been found to have lower brain glutathione[11] and higher brain ventricular lactate.[11]

Abnormal distribution of acetyl-L-carnitine uptake, one of the biochemical markers of chronic fatigue syndrome, has been reported in the prefrontal cortex.[citation needed][10]

Inflammation and brain imaging

Whole-brain MRS markers of neuroinflammation have been found in ME/CFS.[23] fMRI images document neuroinflammation.[24]

In 2014, a Japanese positron emission tomography (PET) study looked at neuroinflammation in nine patients with ME/CFS and ten controls. The researchers measured a protein expressed by activated microglia and found that values in the cingulate cortex, hippocampus, amygdala, thalamus, midbrain, and pons were 45%–199% higher in ME/CFS patients than in healthy controls. The values in the amygdala, thalamus, and midbrain positively correlated with cognitive impairment score, the values in the cingulate cortex and thalamus positively correlated with pain score, and the value in the hippocampus positively correlated with depression score.[25]

In 2019, Mueller et al. investigated neuroinflammation, and found abnormalities affecting the whole brain rather than only part of the brain in ME/CFS patients.[23]

"This study is the first to investigate whole-brain MRS markers of neuroinflammation in ME/CFS. We report metabolite and temperature abnormalities in ME/CFS patients in widely distributed brain areas, suggesting ME/CFS is driven by diffuse pathophysiological processes affecting the whole brain, rather than regionally limited, which is consistent with the heterogeneity of its clinical symptoms. Our findings add support to the hypothesis that ME/CFS is the result of chronic, low-level neuroinflammation. While the whole-brain results are preliminary, we note that they largely agree with past publications that use MRS in ME/CFS. These results should be replicated in future studies with larger samples to further establish the profile of pathophysiological abnormalities in the brains of ME/CFS patients. Ultimately, the development of sensitive MRI markers of ME/CFS could supplement clinical tests to help guide treatment decisions."[23]

Several neurochemicals have been studied in ME patients. Myoinositol is thought to be involved in astrocyte function[27] and tended to be higher in ME patients than in controls.[26]

N-acetylaspartate (NAA) is considered a marker of neuron density and has been found to be altered in other neurological disorders.[27] It has been shown to be lower in ME patients,[28][26] but this was not found in all studies.[29][30]

Choline is linked to activation of glia, loss of energy and expression of macrophages in the brain,[27] and has been shown to differ in ME patients compared to controls.[28][26][30][31]

Lactate increases when more energy is being expended. It has been shown to be higher in ME patients than in controls,[32][33][34][35] and to differ significantly from lactate levels in people with psychological disorders.[32][35] Both ME patients and fibromyalgia patients were found to have similar levels of elevated lactate, so more tests would be needed to differentiate the two.[34]

Though contrasts were found between patients with ME and healthy controls in many of these biomarker studies, researchers are not sure what the changes mean.

Electrical activity

In 2016, a qEEG/LORETA study of nine controls and nine CFS patients (per DePaul Symptom Questionnaire (DSQ) and Canadian Consensus Criteria (CCC) definitions) found significantly decreased eLORETA source analysis oscillations in the occipital, parietal, posterior cingulate, and posterior temporal lobes in Alpha and Alpha-2. This research suggests that "disruptions in these regions and networks could be a neurobiological feature of the disorder, representing underlying neural dysfunction."[36]

Also in 2016, a qEEG/LORETA study of one CFS patient (per DSQ and CCC definitions) found deregulation of the functional connectivity networks. This may explain the common symptom of perceived cognitive deficits such as slow thinking, difficulty in reading comprehension, reduced learning and memory abilities and an overall feeling of being in a "fog".[37]

T2 Hyperintensities in MRI

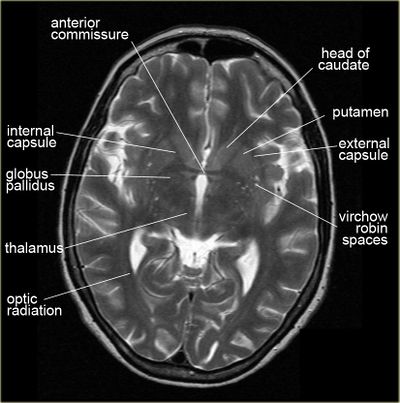

Possible white matter abnormalities of unknown etiology are found on MRIs of some ME/CFS patients.[6][38][39] White matter abnormalities identified by T2 hyperintensities might indicate lesions or abnormally dilated perivascular spaces (also known as Virchow-Robin spaces).[40]

- 1993, A comparison of brain MRI scans from 52 CFS patients and 52 controls found that 27% of CFS patients had findings considered abnormal, while only 2% of controls had findings considered abnormal. Abnormalities included T2 hyperintensities and ventricular enlargement.[38]

- 1999, A comparison of brain MRI scans from 39 CFS patients and 19 controls found that the 21 CFS patients who did not have a psychiatric diagnosis had significantly more T2 hyperintensities, compared to either controls or the 18 CFS patients with a psychiatric diagnosis.[39]

Since T2 hyperintensities are found in many different neurological conditions, some neurologists consider them to be diagnostically insignificant. Others point out that perhaps they should not be ignored, as they are correlated with cognitive disability and poor motor function.[41][42][43]

UNSORTED/unincorporated articles

- More abnormal spinal fluids,[11] and psychiatric comorbidity does not influence any of these potential biological markers of CFS.[11]

- A subgroup of CFS patients with brain abnormalities may have an underlying encephalopathy producing their illness.[11]

- 2014, Brains of People With Chronic Fatigue Syndrome Offer Clues About Disorder By David Tuller - New York Times: Well[44]

- 2016, One six-year longitudinal study found that Chronic Fatigue Syndrome (CFS) (meeting the Fukuda criteria and Canadian Consensus Criteria) is associated with decreases in white matter, gray matter and blood volume deficits in the brain as compared to healthy controls.[6] (Full text)

- 2017, A study using segmented anatomical MRI brain scans showed that, adjusting for total intracranial volume, CFS patients (as per Fukuda diagnostic criteria) had larger gray matter volume and lower white matter volume. The increased gray matter volume was predominantly found in the amygdala and insula cortex. The decreased white matter was predominantly found in the midbrain and temporal lobe.[45] - (Full text)

- 2020, Exercise alters brain activation in Gulf War Illness and Myalgic Encephalomyelitis/Chronic Fatigue Syndrome[46] (Full text) - see images folder

- 2022, Review of the Midbrain Ascending Arousal Network Nuclei[47] (Full text)

Chronic pain

In 2015, Loggia's team[48] successfully imaged neuroinflammation, specifically the activation of glial cells, in the brains of patients with chronic pain using a new imaging approach: a combination of magnetic resonance imaging (MRI) and positron emission tomography (PET), or MR/PET scanning.[49] MR/PET blends the structural and functional detail of tissues that an MRI gives with the sensitivity and metabolic function that PET scans provide.[49] Specifically, PET scanning detects the radiation given off by a substance injected into a person, called a radiotracer, following its distribution throughout the body.[49]

News and articles

- 2014, Brains of People With Chronic Fatigue Syndrome Offer Clues About Disorder - New York Times: Well (2014) - David Tuller

- 2015, Important Link between the Brain and Immune System Found - Scientific American

- 2016, Six year study of abnormal brain changes in chronic fatigue syndrome patients - ME Australia

- 2016, A bug in fMRI software could invalidate 15 years of brain research - Science Alert

- 2016, Updated map of the human brain hailed as a scientific tour de force - The Guardian

- 2016, Updated brain map identifies 97 new areas - CNN

- 2016, Progressive Brain Changes in Patients with Chronic Fatigue Syndrome: Are our Brains Starved of Oxygen - #MEAction

- 2016, Case Study: "Brain Fog" in CFS can be seen in qEEG/Loreta - #MEAction

- 2018, Study Identifies the Types of Cognitive Dysfunction That Are Most Prevalent in Fibromyalgia - Fibromyalgia News Today

- 2018, Brain on Fire: Widespread Neuroinflammation Found in Chronic Fatigue Syndrome (ME/CFS) - Cort Johnson, HealthRising

- 2019, Brain Imaging and Behavior publication from Dr. Jarred Younger's SMCI Ramsay pilot - Solve ME/CFS

Talks and interviews

- 2018, ME/CFS Involves Brain Inflammation: Results from a Ramsay Pilot Study - Jarred Younger via Solve ME/CFS

Notable studies

- 2022, Review of the Midbrain Ascending Arousal Network Nuclei and Implications for Myalgic Encephalomyelitis/Chronic Fatigue Syndrome (ME/CFS), Gulf War Illness (GWI) and Postexertional Malaise (PEM)[47] - (Full text)

- 2020, Exercise alters brain activation in Gulf War Illness and Myalgic Encephalomyelitis/Chronic Fatigue Syndrome[46] - (Full text)

- 2019, Evidence of widespread metabolite abnormalities in Myalgic encephalomyelitis/chronic fatigue syndrome: assessment with whole-brain magnetic resonance spectroscopy.[23] - (Full text)

- 2019, Intra brainstem connectivity is impaired in chronic fatigue syndrome[50] - (Full text)

- 2018, Structural brain changes versus self-report: machine-learning classification of chronic fatigue syndrome patients[51] - (Abstract)

- 2018, Cortical hypoactivation during resting EEG suggests central nervous system pathology in patients with chronic fatigue syndrome[52] - (Abstract)

- 2018, Brain function characteristics of chronic fatigue syndrome: A task fMRI study[53] (Full Text)

- 2018, Neuroinflammation in the Brain of Patients with Myalgic Encephalomyelitis/Chronic Fatigue Syndrome[54]

- 2018, Hyperintense sensorimotor T1 spin echo MRI is associated with brainstem abnormality in chronic fatigue syndrome[55] (Full Text)

- 2018, Brain abnormalities in myalgic encephalomyelitis/chronic fatigue syndrome: Evaluation by diffusional kurtosis imaging and neurite orientation dispersion and density imaging[56] (Abstract)

- 2017, Multimodal and simultaneous assessments of brain and spinal fluid abnormalities in chronic fatigue syndrome and the effects of psychiatric comorbidity[11] - (Full text)

- 2017, CNS findings in chronic fatigue syndrome and a neuropathological case report[57] - (Full text)

- 2017, Grey and white matter differences in Chronic Fatigue Syndrome – A voxel-based morphometry study[45]

- 2016, Progressive brain changes in patients with chronic fatigue syndrome: A longitudinal MRI study[6] - (Full text)

- 2016, Relative increase in choline in the occipital cortex in chronic fatigue syndrome[29] - (Abstract)

- 2016, A multi-modal parcellation of human cerebral cortex - (Full text)[58]

- 2014, Right Arcuate Fasciculus Abnormality in Chronic Fatigue Syndrome[8] - (Abstract)

See also

- Brainstem

- Spine

- Central nervous system

- Neurology of ME/CFS

- List of abnormal findings in chronic fatigue syndrome and myalgic encephalomyelitis

Learn more

- Neuroquant Triage Brain Atrophy Report (MRI) - Gives physicians a quick reference and in-depth look at regional and global brain structure volumes, which can change as a result of a brain injury or neurodegenerative disease. It provides volume measurements of 44 brain structures for the right hemisphere, left hemisphere and total structure, all sorted by lobe and region, along with a detailed table of intracranial volume (ICV) and right, left and total values for normative percentile of ICV. Resulting values are automatically compared to gender- and age-appropriate reference distributions.

- 2-Minute Neuroscience: Lobes and Landmarks of the Brain Surface (Lateral View) - Neuroscientifically Challenged (YouTube)

References

1. Clark MA, Douglas M, Choi J (March 28, 2018). "35.3 The Central Nervous System". Biology 2e. Houston, Texas: OpenStax.

2. Masuda, Takahiro; Sankowski, Roman; Staszewski, Ori; Böttcher, Chotima; Amann, Lukas; Sagar; Scheiwe, Christian; Nessler, Stefan; Kunz, Patrik (February 2019). "Spatial and temporal heterogeneity of mouse and human microglia at single-cell resolution". Nature. 566 (7744): 388–392. doi:10.1038/s41586-019-0924-x. ISSN 1476-4687.

3. "Brain immune system is key to recovery from motor neuron degeneration: Results in study point to new approaches for ALS therapy". ScienceDaily. February 20, 2018. Retrieved March 31, 2019.

4. Stetka, Bret (July 21, 2015). "Important Link between the Brain and Immune System Found". Scientific American. Retrieved March 31, 2019.

5. "An interconnection between the nervous and immune system: Neuroendocrine reflex triggers infections". ScienceDaily. September 29, 2017. Retrieved March 31, 2019.

6. Shan, ZY; Kwiatek, R; Burnet, R; Del Fante, P; Staines, DR; Marshall-Gradisnik, SM; Barnden, LR (April 28, 2016). "Progressive brain changes in patients with chronic fatigue syndrome: A longitudinal MRI study". Journal of magnetic resonance imaging: JMRI. 44: 1301–1311. doi:10.1002/jmri.25283. PMID 27123773.

7. Goldman, Bruce (October 28, 2014). "Study finds brain abnormalities in chronic fatigue patients". Stanford Medicine News Center.

8. Zeineh, Michael M; Kang, James; Atlas, Scott W; Raman, Mira M; Reiss, Allan L; Norris, Jane L; Valencia, Ian; Montoya, Jose G (October 29, 2014). "Right Arcuate Fasciculus Abnormality in Chronic Fatigue Syndrome". Radiology. 274 (2): 517–526. doi:10.1148/radiol.14141079.

9. Puri, BK; Jakeman, PM; Agour, M; Gunatilake, KDR; Fernando, KAC; Gurusinghe, AI; Treasaden, IH; Waldman, AD; Gishen, P (2012). "Regional grey and white matter volumetric changes in myalgic encephalomyelitis (chronic fatigue syndrome): a voxel-based morphometry 3 T MRI study". British Journal of Radiology. 85 (1015): e270-3. doi:10.1259/bjr/93889091.

10. Okada, Tomohisa; Tanaka, Masaaki; Kuratsune, Hirohiko; Watanabe, Yasuyoshi; Sadato, Norihiro (October 4, 2004). "Mechanisms underlying fatigue: a voxel-based morphometric study of chronic fatigue syndrome". BMC Neurology. 4 (1): 14. doi:10.1186/1471-2377-4-14. ISSN 1471-2377. PMC 524491. PMID 15461817.

11. Natelson, Benjamin; Mao, Xiangling; Stegner, Aaron J; Lange, Gudrun; Vu, Diana; Blate, Michelle; Kang, Guoxin; Soto, Eli; Kapusuz, Tolga; Shungu, Dikoma C (2017). "Multimodal and simultaneous assessments of brain and spinal fluid abnormalities in chronic fatigue syndrome and the effects of psychiatric comorbidity". Journal of the Neurological Sciences. 375: 411-416. doi:10.1016/j.jns.2017.02.046. PMC 5393352.

12. Costa, D.C.; Tannock, C.; Brostoff, J. (November 1995). "Brainstem perfusion is impaired in chronic fatigue syndrome". QJM: monthly journal of the Association of Physicians. 88 (11): 767–773. ISSN 1460-2725. PMID 8542261.

13. Barnden, Leighton R.; Crouch, Benjamin; Kwiatek, Richard; Burnet, Richard; Mernone, Anacleto; Chryssidis, Steve; Scroop, Garry; Fante, Peter Del (2011). "A brain MRI study of chronic fatigue syndrome: evidence of brainstem dysfunction and altered homeostasis". NMR in Biomedicine. 24 (10): 1302–1312. doi:10.1002/nbm.1692. ISSN 1099-1492. PMC 4369126. PMID 21560176.

14. Biswal, Bharat; Kunwar, Pratap; Natelson, Benjamin H. (February 15, 2011). "Cerebral blood flow is reduced in chronic fatigue syndrome as assessed by arterial spin labeling". Journal of the Neurological Sciences. 301 (1): 9–11. doi:10.1016/j.jns.2010.11.018. ISSN 0022-510X.

15. Yoshiuchi, Kazuhiro; Farkas, Jeffrey; Natelson, Benjamin H. (2006). "Patients with chronic fatigue syndrome have reduced absolute cortical blood flow". Clinical Physiology and Functional Imaging. 26 (2): 83–86. doi:10.1111/j.1475-097X.2006.00649.x. ISSN 1475-097X.

16. Freedman, M.; Kirsh, J.C.; Gray, B.; Chung, D.G.; Abbey, S.E.; Salit, I.E.; Ichise, M. (October 1992). "Assessment of regional cerebral perfusion by 99Tcm-HMPAO SPECT in chronic fatigue syndrome". Nuclear medicine communications. 13 (10): 767–772. ISSN 0143-3636. PMID 1491843.

17. Chao, C.C.; Hu, S.; Pheley, A.M.; Schenck, C.H.; Grammith, F.C.; Sirr, S.A.; Peterson, P.K. (March 1, 1994). "Effects of mild exercise on cytokines and cerebral blood flow in chronic fatigue syndrome patients". Clinical and Diagnostic Laboratory Immunology. 1 (2): 222–226. ISSN 1071-412X. PMID 7496949.

18. Lange, Gudrun; Wang, Samuel; DeLuca, John; Natelson, Benjamin H. (September 28, 1998). "Neuroimaging in chronic fatigue syndrome". The American Journal of Medicine. 105 (3, Supplement 1): 50S–53S. doi:10.1016/S0002-9343(98)00175-2. ISSN 0002-9343.

19. CMRC Conference 2017 day 1- Prof Dr Frans Visser, retrieved September 3, 2019

20. van Campen, C. (Linda) M.C.; Verheugt, Freek W.A.; Rowe, Peter C.; Visser, Frans C. (January 1, 2020). "Cerebral blood flow is reduced in ME/CFS during head-up tilt testing even in the absence of hypotension or tachycardia: A quantitative, controlled study using Doppler echography". Clinical Neurophysiology Practice. 5: 50–58. doi:10.1016/j.cnp.2020.01.003. ISSN 2467-981X.

21. Bartenstein, P.; Egle, U.T.; Schreckenberger, M.; Hardt, J.; Nix, W.A.; Siessmeier, T. (July 1, 2003). "Observer independent analysis of cerebral glucose metabolism in patients with chronic fatigue syndrome". Journal of Neurology, Neurosurgery & Psychiatry. 74 (7): 922–928. doi:10.1136/jnnp.74.7.922. ISSN 0022-3050. PMID 12810781.

22. Tirelli, Umberto; Chierichetti, Franca; Tavio, Marcello; Simonelli, Cecilia; Bianchin, Gianluigi; Zanco, Pierluigi; Ferlin, Giorgio (September 28, 1998). "Brain positron emission tomography (PET) in chronic fatigue syndrome: preliminary data". The American Journal of Medicine. 105 (3, Supplement 1): 54S–58S. doi:10.1016/S0002-9343(98)00179-X. ISSN 0002-9343.

23. Mueller, Christina; Lin, Joanne C; Sheriff, Sulaiman; Maudsley, Andrew A; Younger, Jarred W (2019). "Evidence of widespread metabolite abnormalities in Myalgic encephalomyelitis/chronic fatigue syndrome: assessment with whole-brain magnetic resonance spectroscopy". Brain Imaging and Behavior. 14: 562–572. doi:10.1007/s11682-018-0029-4.

24. Zeineh, Michael M; Kang, James; Atlas, Scott W; Raman, Mira M; Reiss, Allan L; Norris, Jane L; Valencia, Ian; Montoya, Jose G (October 29, 2014). "Right Arcuate Fasciculus Abnormality in Chronic Fatigue Syndrome". Radiology. 274 (2): 517–526. doi:10.1148/radiol.14141079.

25. Nakatomi, Yasuhito; Mizuno, Kei; Ishii, Akira; Wada, Yasuhiro; Tanaka, Masaaki; Tazawa, Shusaku; Onoe, Kayo; Fukuda, Sanae; Kawabe, Joji; Takahashi, Kazuhiro; Kataoka, Yosky; Shiomi, Susumu; Yamaguti, Kouzi; Inaba, Masaaki; Kuratsune, Hirohiko; Watanabe, Yasuyoshi (March 24, 2014), "Neuroinflammation in Patients with Chronic Fatigue Syndrome/Myalgic Encephalomyelitis: An ¹¹C-(R)-PK11195 PET Study", Journal of Nuclear Medicine, 55 (6): 945-50, doi:10.2967/jnumed.113.131045, PMID 24665088

26. Brooks, J.C.; Roberts, N.; Whitehouse, G.; Majeed, T. (November 2000). "Proton magnetic resonance spectroscopy and morphometry of the hippocampus in chronic fatigue syndrome". The British Journal of Radiology. 73 (875): 1206–1208. doi:10.1259/bjr.73.875.11144799. ISSN 0007-1285. PMID 11144799.

27. Albrecht, Daniel S.; Granziera, Cristina; Hooker, Jacob M.; Loggia, Marco L. (April 20, 2016). "In Vivo Imaging of Human Neuroinflammation". ACS chemical neuroscience. 7 (4): 470–483. doi:10.1021/acschemneuro.6b00056. ISSN 1948-7193. PMC 5433433. PMID 26985861.

28. Chaudhuri, A.; Condon, B.R.; Gow, J.W.; Brennan, D.; Hadley, D.M. (February 10, 2003). "Proton magnetic resonance spectroscopy of basal ganglia in chronic fatigue syndrome". Neuroreport. 14 (2): 225–228. doi:10.1097/01.wnr.0000054960.21656.64. ISSN 0959-4965. PMID 12598734.

29. Puri, B.K.; Counsell, S.J.; Zaman, R.; Main, J.; Collins, A.G.; Hajnal, J.V.; Davey, N.J. (November 2002). "Relative increase in choline in the occipital cortex in chronic fatigue syndrome". Acta Psychiatrica Scandinavica. 106 (3): 224–226. ISSN 0001-690X. PMID 12197861.

30. Tomoda, A.; Miike, T.; Yamada, E.; Honda, H.; Moroi, T.; Ogawa, M.; Ohtani, Y.; Morishita, S. (January 2000). "Chronic fatigue syndrome in childhood". Brain & Development. 22 (1): 60–64. ISSN 0387-7604. PMID 10761837.

31. Puri, B.K.; Agour, M.; Gunatilake, K.D.R.; Fernando, K.A.C.; Gurusinghe, A.I.; Treasaden, I.H. (November 2009). "An in vivo proton neurospectroscopy study of cerebral oxidative stress in myalgic encephalomyelitis (chronic fatigue syndrome)". Prostaglandins, Leukotrienes, and Essential Fatty Acids. 81 (5–6): 303–305. doi:10.1016/j.plefa.2009.10.002. ISSN 1532-2823. PMID 19906518.

32. Mathew, Sanjay J.; Mao, Xiangling; Keegan, Kathryn A.; Levine, Susan M.; Smith, Eric L.P.; Heier, Linda A.; Otcheretko, Viktor; Coplan, Jeremy D.; Shungu, Dikoma C. (April 2009). "Ventricular cerebrospinal fluid lactate is increased in chronic fatigue syndrome compared with generalized anxiety disorder: an in vivo 3.0 T (1)H MRS imaging study". NMR in biomedicine. 22 (3): 251–258. doi:10.1002/nbm.1315. ISSN 0952-3480. PMID 18942064.

33. Shungu, Dikoma C.; Weiduschat, Nora; Murrough, James W.; Mao, Xiangling; Pillemer, Sarah; Dyke, Jonathan P.; Medow, Marvin S.; Natelson, Benjamin H.; Stewart, Julian M. (September 2012). "Increased ventricular lactate in chronic fatigue syndrome. III. Relationships to cortical glutathione and clinical symptoms implicate oxidative stress in disorder pathophysiology". NMR in biomedicine. 25 (9): 1073–1087. doi:10.1002/nbm.2772. ISSN 1099-1492. PMC 3896084. PMID 22281935.

34. Natelson, Benjamin H.; Vu, Diana; Coplan, Jeremy D.; Mao, Xiangling; Blate, Michelle; Kang, Guoxin; Soto, Eli; Kapusuz, Tolga; Shungu, Dikoma C. (2017). "Elevations of Ventricular Lactate Levels Occur in Both Chronic Fatigue Syndrome and Fibromyalgia". Fatigue: Biomedicine, Health & Behavior. 5 (1): 15–20. doi:10.1080/21641846.2017.1280114. ISSN 2164-1846. PMC 5754037. PMID 29308330.

35. Murrough, James W.; Mao, Xiangling; Collins, Katherine A.; Kelly, Chris; Andrade, Gizely; Nestadt, Paul; Levine, Susan M.; Mathew, Sanjay J.; Shungu, Dikoma C. (July 2010). "Increased ventricular lactate in chronic fatigue syndrome measured by 1H MRS imaging at 3.0 T. II: comparison with major depressive disorder". NMR in biomedicine. 23 (6): 643–650. doi:10.1002/nbm.1512. ISSN 1099-1492. PMID 20661876.

36. Zinn, Marcie; Zinn, Mark; Jason, Leonard (2016), "Intrinsic Functional Hypoconnectivity in Core Neurocognitive Networks Suggests Central Nervous System Pathology in Patients with Myalgic Encephalomyelitis: A Pilot Study", Applied Psychophysiology and Biofeedback, 41 (3): 283-300, doi:10.1007/s10484-016-9331-3, PMID 26869373

37. Zinn, Marcie L; Zinn, Mark A.; Jason, Leonard (2016). "qEEG / LORETA in Assessment of Neurocognitive Impairment in a Patient with Chronic Fatigue Syndrome: A Case Report". sciforschenonline.org. SciForschen. doi:10.16966/2469-6714.110. ISSN 2469-6714. Retrieved August 28, 2018.

38. Natelson, B.H.; Cohen, J.M.; Brassloff, I.; Lee, H.J. (December 15, 1993). "A controlled study of brain magnetic resonance imaging in patients with the chronic fatigue syndrome". Journal of the Neurological Sciences. 120 (2): 213–217. doi:10.1016/0022-510x(93)90276-5. ISSN 0022-510X. PMID 8138812.

39. Lange, G.; DeLuca, J.; Maldjian, J.A.; Lee, H.; Tiersky, L.A.; Natelson, B.H. (December 1, 1999). "Brain MRI abnormalities exist in a subset of patients with chronic fatigue syndrome". Journal of the Neurological Sciences. 171 (1): 3–7. doi:10.1016/s0022-510x(99)00243-9. ISSN 0022-510X. PMID 10567042.

40. Kwee, Robert M.; Kwee, Thomas C. (July 1, 2007). "Virchow-Robin Spaces at MR Imaging". RadioGraphics. 27 (4): 1071–1086. doi:10.1148/rg.274065722. ISSN 0271-5333.

41. Jeong, Eun Hye; Lee, Yong Joo; Kim, Sang Joon; Lee, Jae-Hong (2015). "Is the Severity of Dilated Virchow-Robin Spaces Associated with Cognitive Dysfunction?". Dementia and Neurocognitive Disorders. 14 (3): 114. doi:10.12779/dnd.2015.14.3.114. ISSN 1738-1495.

42. Sachdev, P.S.; Wen, W.; Christensen, H.; Jorm, A.F. (March 1, 2005). "White matter hyperintensities are related to physical disability and poor motor function". Journal of Neurology, Neurosurgery & Psychiatry. 76 (3): 362–367. doi:10.1136/jnnp.2004.042945. ISSN 0022-3050. PMID 15716527.

43. Paradise, Matthew; Crawford, John D.; Lam, Ben C.P.; Wen, Wei; Kochan, Nicole A.; Makkar, Steve; Dawes, Laughlin; Trollor, Julian; Draper, Brian (January 27, 2021). "The Association of Dilated Perivascular Spaces with Cognitive Decline and Incident Dementia". Neurology. doi:10.1212/WNL.0000000000011537. ISSN 0028-3878. PMID 33504642.

44. Tuller, David (November 24, 2014). "Brains of People With Chronic Fatigue Syndrome Offer Clues About Disorder". NY Times.

45. Finkelmeyer, Andreas; He, Jiabao; Maclachlan, Laura; Watson, Stuart; Gallagher, Peter; Newton, Julia L.; Blamire, Andrew M. (2018). "Grey and white matter differences in Chronic Fatigue Syndrome – A voxel-based morphometry study". NeuroImage: Clinical. 17: 24–30. doi:10.1016/j.nicl.2017.09.024. PMID 29021956.

46. Washington, Stuart D.; Rayhan, Rakib U.; Garner, Richard; Provenzano, Destie; Zajur, Kristina; Addiego, Florencia Martinez; VanMeter, John W.; Baraniuk, James N. (July 1, 2020). "Exercise alters brain activation in Gulf War Illness and Myalgic Encephalomyelitis/Chronic Fatigue Syndrome". Brain Communications. 2 (2). doi:10.1093/braincomms/fcaa070.

47. Baraniuk, James N. (February 2022). "Review of the Midbrain Ascending Arousal Network Nuclei and Implications for Myalgic Encephalomyelitis/Chronic Fatigue Syndrome (ME/CFS), Gulf War Illness (GWI) and Postexertional Malaise (PEM)". Brain Sciences. 12 (2): 132. doi:10.3390/brainsci12020132. ISSN 2076-3425. PMC 8870178. PMID 35203896.

48. Loggia, Marco L.; Chonde, Daniel B.; Akeju, Oluwaseun; Arabasz, Grae; Catana, Ciprian; Edwards, Robert R.; Hill, Elena; Hsu, Shirley; Izquierdo-Garcia, David (January 8, 2015). "Evidence for brain glial activation in chronic pain patients". Brain. 138 (3): 604–615. doi:10.1093/brain/awu377. ISSN 1460-2156.

49. Inacio, Patricia (October 11, 2018). "In Fibromyalgia Patients, Brain Inflammation Imaged for First Time in Study". Fibromyalgia News Today. Retrieved October 30, 2018.

50. Barnden, Leighton R; Shan, Zack Y; Staines, Donald R; Marshall-Gradisnik, Sonya; Finegan, Kevin; Ireland, Timothy; Bhuta, Sandeep (October 19, 2019). "Intra brainstem connectivity is impaired in chronic fatigue syndrome". NeuroImage: Clinical. 24: 102045. doi:10.1016/j.nicl.2019.102045. ISSN 2213-1582.

51. Staud, Roland; Robinson, Michael E.; Letzen, Janelle E.; Boissoneault, Jeff; Sevel, Landrew S. (August 1, 2018). "Structural brain changes versus self-report: machine-learning classification of chronic fatigue syndrome patients". Experimental Brain Research. 236 (8): 2245–2253. doi:10.1007/s00221-018-5301-8. ISSN 1432-1106. PMID 29846797.

52. Zinn, Mark A.; Zinn, Marcie L.; Valencia, Ian; Jason, Leonard A.; Montoya, Jose G. (2018), "Cortical hypoactivation during resting EEG suggests central nervous system pathology in patients with chronic fatigue syndrome", Biological Psychology, 136 (1): 87-99, doi:10.1016/j.biopsycho.2018.05.016

53. Shan, Zack Y.; Finegan, Kevin; Bhuta, Sandeep; Ireland, Timothy; Staines, Donald R.; Marshall-Gradisnik, Sonya M.; Barnden, Leighton R. (January 1, 2018). National Centre for Neuroimmunology and Emerging Diseases; Medical Imaging Dept., Gold Coast Hosp. "Brain function characteristics of chronic fatigue syndrome: A task fMRI study". NeuroImage: Clinical. 19: 279–286. doi:10.1016/j.nicl.2018.04.025. ISSN 2213-1582 – via Elsevier.

54. Nakatomi, Y; Kuratsune, H; Watanabe, Y (2018). "Neuroinflammation in the Brain of Patients with Myalgic Encephalomyelitis/Chronic Fatigue Syndrome". Brain Nerve. 70 (1): 19-25. doi:10.11477/mf.1416200945. PMID 29348371.

55. Barnden, Leighton R.; Shan, Zack Y.; Staines, Donald R.; Marshall-Gradisnik, Sonya; Finegan, Kevin; Ireland, Timothy; Bhuta, Sandeep (2018). "Hyperintense sensorimotor T1 spin echo MRI is associated with brainstem abnormality in chronic fatigue syndrome". NeuroImage: Clinical. 20: 102–109. doi:10.1016/j.nicl.2018.07.011.

56. Kimura, Yukio; Sato, Noriko; Ota, Miho; Shigemoto, Yoko; Morimoto, Emiko; Enokizono, Mikako; Matsuda, Hiroshi; Shin, Isu; Amano, Keiko (November 14, 2018). "Brain abnormalities in myalgic encephalomyelitis/chronic fatigue syndrome: Evaluation by diffusional kurtosis imaging and neurite orientation dispersion and density imaging". Journal of Magnetic Resonance Imaging. doi:10.1002/jmri.26247. ISSN 1053-1807.

57. Ferrero, Kimberly; Silver, Mitchell; Cocchetto, Alan; Masliah, Eliezer; Langford, Dianne (2017). "CNS findings in chronic fatigue syndrome and a neuropathological case report". Journal of Investigative Medicine. 65 (6): 974–983. doi:10.1136/jim-2016-000390.

58. Glasser, Matthew F.; Coalson, Timothy S.; Robinson, Emma C.; Hacker, Carl D.; Harwell, John; Yacoub, Essa; Ugurbil, Kamil; Andersson, Jesper; Beckmann, Christian F. (July 20, 2016). "A multi-modal parcellation of human cerebral cortex". Nature. 536 (7615): 171–178. doi:10.1038/nature18933. ISSN 0028-0836.